Everyone says they have agents. Most do not. Here is what actually makes an AI system an agent, how agents differ from chatbots and automation, and the formula that turns a language model into something that can build software.

Open any product page in tech right now and you will find the word "agent." AI agents, coding agents, sales agents, support agents. The word has become so overused that it risks meaning nothing.

But it does mean something specific. And the difference between a product that has agents and a product that just calls itself agentic matters — especially if you are building products and need to know what you are actually creating.

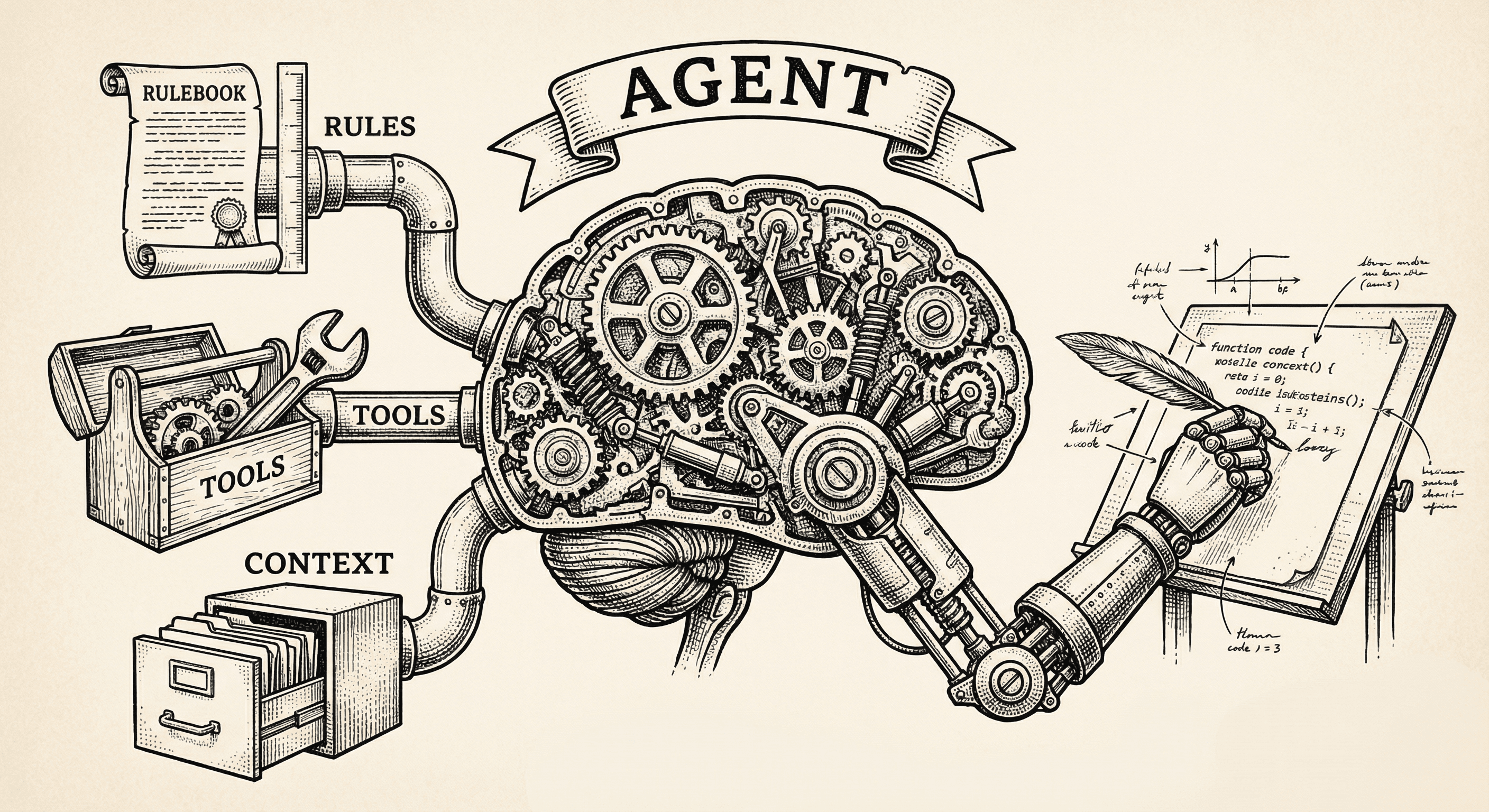

An agent is made of four ingredients:

Rules — what to do, what not to do, how to behave. Security policies, design standards, coding patterns, quality bars. Rules are the operating manual.

Tools — the ability to take action. Reading files, writing code, running tests, calling APIs, generating images, sending emails. Without tools, an AI can only talk. With tools, it can do.

Context — the specific knowledge that makes the agent effective in its domain. Your codebase, your design preferences, your product brief, your user feedback history. Context is what separates a generic AI from one that knows your project.

LLM (the brain) — the language model that thinks. It reads the rules, uses the tools, interprets the context, and makes decisions. Claude, GPT, Gemini — these are the brains.

Rules + Tools + Context + LLM = Agent.
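To make the formula concrete, here is a minimal Python sketch. It assumes the model is a plain function that reads a prompt and returns either the name of a tool to use or plain text. The `Agent` class, `fake_llm`, and the `run_tests` tool are hypothetical stand-ins, not any vendor's API. A real system would call an actual model and parse a structured tool call.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for a model call: takes a prompt, returns a tool name
# (or plain text when no tool fits).
LLM = Callable[[str], str]


@dataclass
class Agent:
    rules: list[str]                        # what to do and what not to do
    tools: dict[str, Callable[[str], str]]  # actions the agent can take
    context: dict[str, str]                 # project-specific knowledge
    llm: LLM                                # the brain that makes decisions

    def build_prompt(self, task: str) -> str:
        # Rules, context, and the names of available tools are packed
        # into what the model reads before it decides.
        rules = "\n".join(f"- {r}" for r in self.rules)
        context = "\n".join(f"{k}: {v}" for k, v in self.context.items())
        tools = ", ".join(self.tools)
        return f"Rules:\n{rules}\nContext:\n{context}\nTools: {tools}\nTask: {task}"

    def run(self, task: str) -> str:
        choice = self.llm(self.build_prompt(task))
        if choice not in self.tools:
            # No matching tool: the model can only talk.
            return f"(advice only) {choice}"
        return self.tools[choice](task)


def fake_llm(prompt: str) -> str:
    # Toy "judgment": use the test runner if one is available.
    return "run_tests" if "run_tests" in prompt else "Try running your tests."


agent = Agent(
    rules=["Never push directly to main"],
    tools={"run_tests": lambda task: f"ran tests for: {task}"},
    context={"repo": "example-app"},
    llm=fake_llm,
)
print(agent.run("fix login bug"))    # ran tests for: fix login bug

# Same rules, context, and brain, but no tools.
chatbot = Agent(rules=agent.rules, tools={}, context=agent.context, llm=fake_llm)
print(chatbot.run("fix login bug"))  # (advice only) Try running your tests.
```

Removing the tools while keeping everything else turns the same model into something that can only hand back advice.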

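Remove any one piece and you do not have an agent. Without rules, it has no operating manual. Without tools, it can only talk. Without context, it is a generic AI that does not know your project. Without the LLM, nothing is doing the thinking.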

Chatbots, automation, and agents get confused constantly. Here is how they differ:

A chatbot answers questions. You ask, it responds. It can be very intelligent — it can explain complex topics, write essays, analyze data. But it cannot do anything. It cannot edit your code, fix your bug, or deploy your site. It can only tell you how.

Think of a chatbot as a very knowledgeable advisor sitting in a chair. Brilliant, but their hands are tied.

Automation follows predefined rules with no judgment. "When a new email arrives, forward it to Slack." "When a form is submitted, save it to a spreadsheet." "When the build fails, send a notification."

The path is hardcoded. There is no thinking. If the situation changes in a way the automation was not programmed for, it breaks or does the wrong thing. Automation is powerful for repetitive, predictable tasks. It is useless for anything that requires judgment.

Think of automation as a conveyor belt. It moves things from A to B reliably, but it cannot decide to take a different route.
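In code, automation amounts to a lookup table. This is a minimal Python sketch, and the event and action names are hypothetical placeholders for the examples above.

```python
# Hypothetical trigger -> action table mirroring the examples above.
RULES = {
    "new_email": "forward_to_slack",
    "form_submitted": "save_to_spreadsheet",
    "build_failed": "send_notification",
}


def automate(event: str) -> str:
    # The path is hardcoded: look the event up, nothing more.
    if event not in RULES:
        # No rule was written for this situation, so the automation breaks.
        raise KeyError(f"no rule for event: {event!r}")
    return RULES[event]


print(automate("build_failed"))  # send_notification

try:
    automate("deploy_timed_out")
except KeyError as err:
    print(err)
```

Anything outside the table raises an error. At no point does the system consider what a sensible response might be.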

An agent perceives, decides, and acts.

It perceives — it reads your codebase, observes that a sign-up flow is broken, notices that a new feature was shipped.

It decides — it determines what to do about what it perceived. Is this a bug or a design choice? Should the fix go in this file or that one? Is this finding critical or minor?

It acts — it writes the fix, runs the tests, generates the report, routes the finding to the right place.

The key difference from automation: the agent encounters situations it was not explicitly programmed for, and it figures out what to do. It uses judgment, not lookup tables.

Think of an agent as a contractor who knows your house. You say "the bathroom is leaking." You do not tell them which pipe, which tool, or which hardware store. They figure it out because they understand plumbing, they know your house, and they have tools.
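The perceive, decide, act cycle can be written as a small loop. This minimal Python sketch assumes the product state is a dictionary of flow statuses and the model is a function that answers "fix" or "ignore". `toy_llm` is a hypothetical stand-in: its single `if` only fakes the judgment a real model would supply.

```python
from typing import Callable

# Hypothetical model call: takes a prompt, returns "fix" or "ignore".
LLM = Callable[[str], str]


def perceive(product: dict[str, str]) -> list[str]:
    # Observe the current state: which flows are reported as broken.
    return [flow for flow, status in product.items() if status == "broken"]


def decide(flow: str, llm: LLM) -> str:
    # Hand the judgment call to the model instead of a lookup table.
    return llm(f"The '{flow}' flow is broken. Bug or design choice? Answer fix or ignore.")


def act(decision: str, flow: str, product: dict[str, str]) -> str:
    if decision == "fix":
        product[flow] = "ok"  # stand-in for writing a fix and running tests
        return f"fixed {flow}"
    return f"left {flow} as-is"


def agent_step(product: dict[str, str], llm: LLM) -> list[str]:
    # One pass of the loop: perceive, then decide and act on each observation.
    return [act(decide(flow, llm), flow, product) for flow in perceive(product)]


def toy_llm(prompt: str) -> str:
    # Toy judgment: treat legacy flows as intentional, fix everything else.
    return "ignore" if "legacy" in prompt else "fix"


product = {"sign-up": "broken", "checkout": "ok", "legacy-export": "broken"}
print(agent_step(product, toy_llm))  # ['fixed sign-up', 'left legacy-export as-is']
print(product["sign-up"])            # ok
```

The loop itself stays simple. What varies from one situation to the next is the decision, which comes from the model rather than from a table written in advance.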

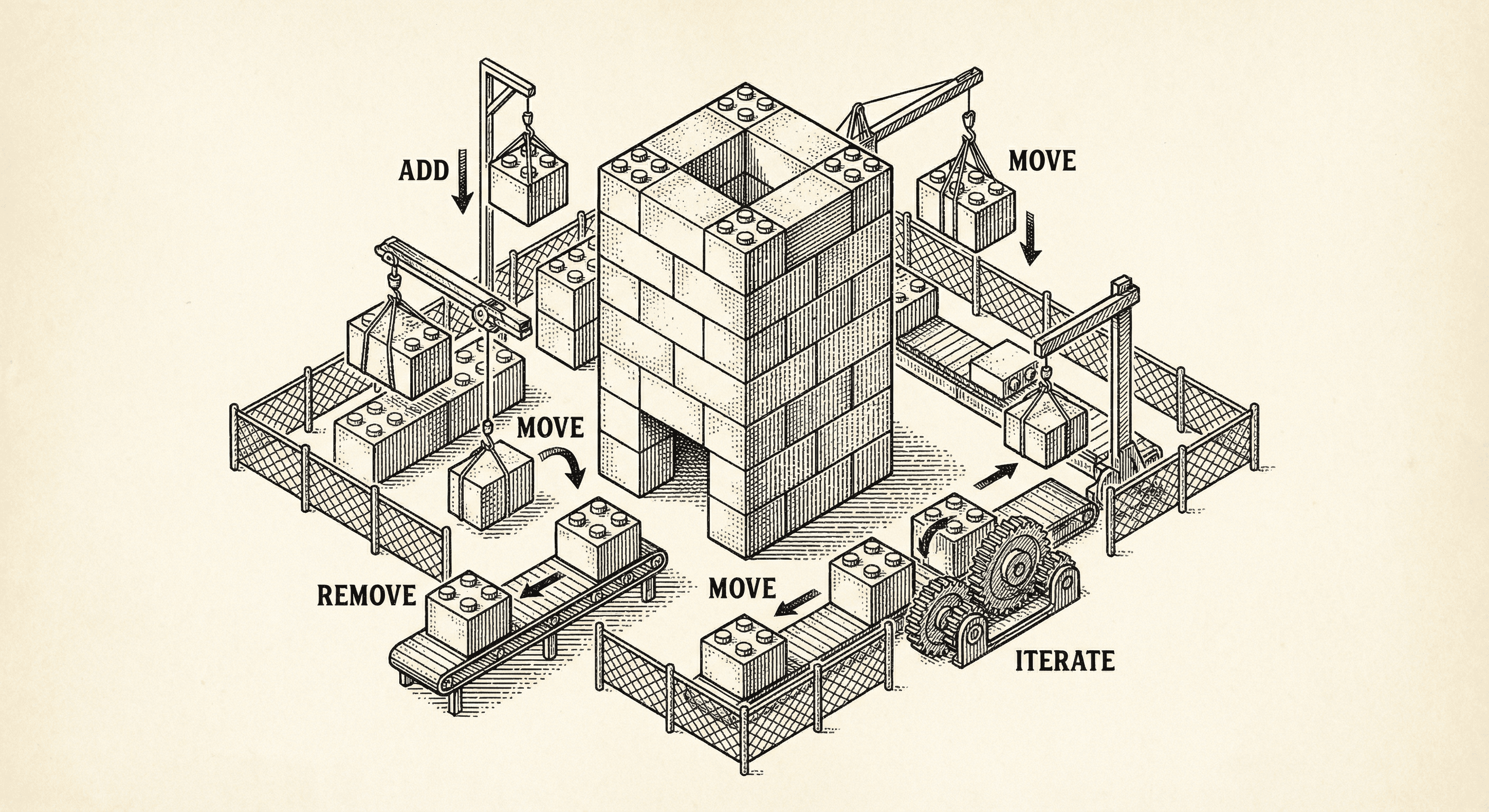

Not all agents are the same. There is a spectrum:

Level 1 is an AI that can take actions, but only when you tell it to. You say "fix this bug" and it fixes it. You say "write a test" and it writes one. It does not act on its own.

This is the most common form of "agent" in products today. It is genuinely useful — having an AI that can edit code and run commands is transformative. But it still requires you at the keyboard, issuing instructions.

Level 2 is an AI that acts on its own when it detects a need. It monitors your product, finds a broken flow, and fixes it without being asked. It notices that a feature was shipped and writes content about it. It scans your code and flags a security issue before you know it exists.

The difference from Level 1: you did not ask it to do anything. It perceived a situation and decided to act.

Level 3 is a system of multiple autonomous agents working together, each specialized in a different domain and communicating through a routing layer. A QA agent finds a problem. It sends the finding to an orchestration agent. The orchestration agent categorizes it and dispatches it to a build agent. The build agent fixes it and confirms the fix. A content agent notices the fix and generates a changelog entry.

No single human instruction triggered this chain. The agents coordinated among themselves.
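A chain like this can be sketched as agents passing messages through a shared router. This minimal Python sketch assumes each agent is a function that takes one message and returns new messages. The agent names, message kinds, and the single hard-coded finding are hypothetical, and each agent body is a placeholder for real model-driven work.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable


@dataclass
class Message:
    to: str    # name of the agent that should handle this message
    kind: str  # hypothetical types: "scan", "finding", "task", "fixed"
    body: str


def qa(msg: Message) -> list[Message]:
    # Placeholder: pretend the scan found a broken flow and report it.
    return [Message("orchestrator", "finding", "sign-up button does nothing")]


def orchestrator(msg: Message) -> list[Message]:
    # Categorize findings and dispatch them; confirmations end here.
    if msg.kind == "finding":
        return [Message("build", "task", msg.body)]
    return []


def build(msg: Message) -> list[Message]:
    # Placeholder fix, then confirm it and let the content agent know.
    return [
        Message("orchestrator", "fixed", msg.body),
        Message("content", "fixed", msg.body),
    ]


def content(msg: Message) -> list[Message]:
    print(f"Changelog: fixed '{msg.body}'")
    return []


AGENTS: dict[str, Callable[[Message], list[Message]]] = {
    "qa": qa,
    "orchestrator": orchestrator,
    "build": build,
    "content": content,
}


def route(start: Message) -> list[str]:
    # The routing layer: deliver messages until no agent emits new ones.
    queue: deque[Message] = deque([start])
    trace: list[str] = []
    while queue:
        msg = queue.popleft()
        trace.append(f"{msg.to}:{msg.kind}")
        queue.extend(AGENTS[msg.to](msg))
    return trace


# A scheduled scan, not a human request, starts the chain.
trace = route(Message("qa", "scan", "nightly"))
print(trace)
```

The only input is the scheduled scan. Every later step is one agent reacting to another agent's message.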

If you are building products with AI, here is what matters:

Your product is not an agent just because it uses an LLM. A chatbot that answers questions about your documentation is not an agent. An AI that reviews your code and applies fixes is.

The value is in the specialization, not the brain. The LLM is a commodity — everyone has access to the same models. What makes your agent valuable is the rules, tools, and context you wrap around it. That is your domain expertise, encoded.

Agents get better when they learn. A static agent follows rules. A learning agent follows rules AND adapts based on experience. A builder profile that tracks your preferences, a QA agent that learns which flows break most often, a content agent that learns your voice — these compound over time.
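In code, the step from a static agent to a learning one can be small. This minimal Python sketch assumes check results arrive as simple pass/fail records. It keeps the QA agent's rule fixed (check every flow) while experience changes the order of the checks. `LearningQAAgent` and its methods are hypothetical, and a real agent would likely need to persist this history between runs.

```python
from collections import Counter


class LearningQAAgent:
    """Hypothetical QA agent that reorders its checks based on past failures."""

    def __init__(self, flows: list[str]) -> None:
        self.flows = flows
        self.failures: Counter[str] = Counter()  # experience: breakages per flow

    def record(self, flow: str, broke: bool) -> None:
        # Learn from each check result.
        if broke:
            self.failures[flow] += 1

    def scan_order(self) -> list[str]:
        # The rule stays fixed (check every flow); the priority adapts.
        # sorted() is stable, so ties keep their original order.
        return sorted(self.flows, key=lambda flow: -self.failures[flow])


qa_agent = LearningQAAgent(["login", "checkout", "sign-up"])
print(qa_agent.scan_order())  # ['login', 'checkout', 'sign-up']

qa_agent.record("sign-up", broke=True)
qa_agent.record("sign-up", broke=True)
qa_agent.record("checkout", broke=True)
qa_agent.record("login", broke=False)
print(qa_agent.scan_order())  # ['sign-up', 'checkout', 'login']
```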

Multi-agent systems are where the real leverage is. A single agent helps you do one thing faster. A system of agents that coordinate with each other lets one person operate what would normally require a team.

Most templates assume you have a plan. Ship Something™ is built for how you actually work — one feature at a time, changing direction, figuring it out as you go. Here is how five architectural layers keep your codebase healthy while you build piecemeal.

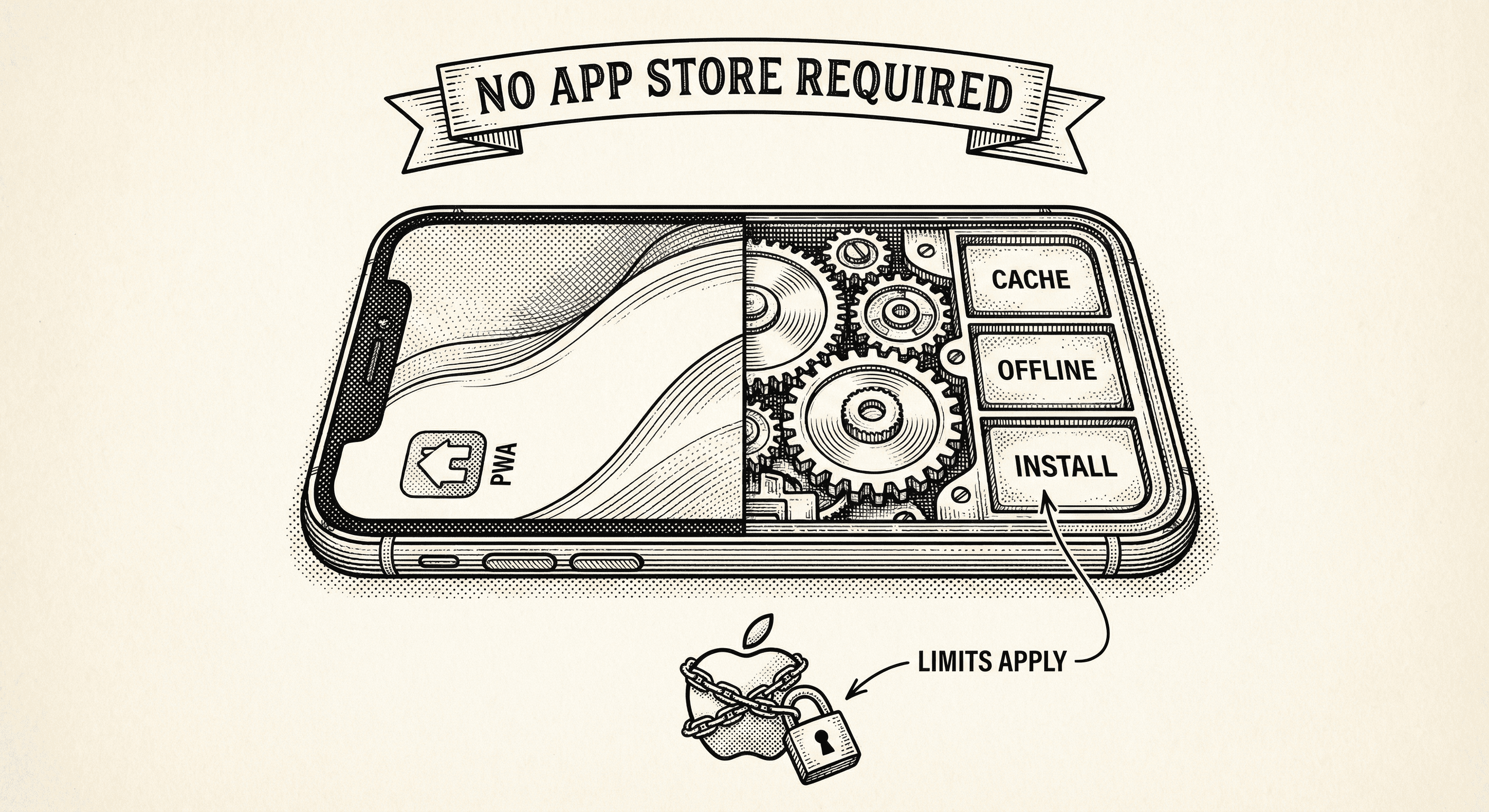

Your web app can install on a phone, work offline, and look like a native app. No App Store approval. No 30% cut. But there is a catch — and it lives in Cupertino.